Is Amazon SageMaker right for your business needs?

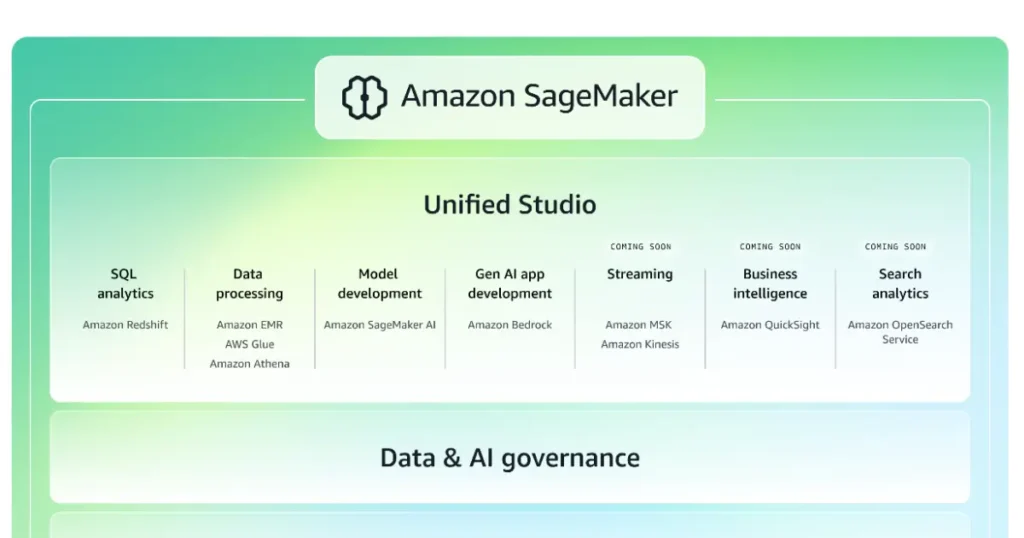

Amazon SageMaker is a cloud-based machine learning service from Amazon Web Services (AWS) that helps organizations build, train, deploy, and manage machine learning models at scale. It is designed to cover the entire machine learning lifecycle, from data preparation and experimentation to production deployment and monitoring, without requiring teams to manage the underlying infrastructure themselves.

Amazon SageMaker Machine Learning Platform

Amazon SageMaker functions as an end-to-end platform for machine learning development and operations. It brings together data processing tools, development environments, training infrastructure, and deployment services under a single managed system. This approach allows data scientists and engineers to focus on model development and business logic rather than server provisioning and maintenance.

Because Amazon SageMaker is tightly integrated with the broader AWS ecosystem, it is commonly used by teams that already rely on Amazon Web Services for data storage, compute, and application hosting.

Amazon SageMaker Model Development and Training

Amazon SageMaker provides multiple ways to build and train machine learning models. Users can start with built-in algorithms, bring their own custom code, or work with popular machine learning frameworks.

Key training-related features of Amazon SageMaker include:

- Managed training jobs with automatic resource provisioning
- Support for common frameworks such as TensorFlow, PyTorch, and XGBoost
- Distributed training for large datasets and complex models
- Configurable compute per job, from a single instance to multi-instance GPU clusters, released when the job finishes

This flexibility allows teams to adapt Amazon SageMaker to both simple experiments and large-scale production workloads.
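To make the training features above more concrete, here is a minimal sketch of how a managed training job is described. It assumes the request shape of boto3's SageMaker `create_training_job` call. The job name, role ARN, bucket paths, and image URI are hypothetical placeholders. The helper `build_training_job_request` is illustrative and not part of any AWS library, and the code only builds a dictionary without contacting AWS.

```python
import json


def build_training_job_request(
    job_name: str,
    image_uri: str,
    role_arn: str,
    train_s3_uri: str,
    output_s3_uri: str,
    instance_type: str = "ml.m5.xlarge",
    instance_count: int = 1,
    max_runtime_seconds: int = 3600,
) -> dict:
    """Assemble keyword arguments for boto3's SageMaker `create_training_job`.

    Hypothetical helper: it only builds a dictionary and does not contact AWS.
    """
    if instance_count < 1:
        raise ValueError("instance_count must be at least 1")
    return {
        "TrainingJobName": job_name,
        "AlgorithmSpecification": {
            # Container image for a built-in algorithm or your own training code
            "TrainingImage": image_uri,
            "TrainingInputMode": "File",
        },
        # IAM role that SageMaker assumes to read input data and write artifacts
        "RoleArn": role_arn,
        "InputDataConfig": [
            {
                "ChannelName": "train",
                "DataSource": {
                    "S3DataSource": {
                        "S3DataType": "S3Prefix",
                        "S3Uri": train_s3_uri,
                        "S3DataDistributionType": "FullyReplicated",
                    }
                },
            }
        ],
        # Where SageMaker writes the trained model artifacts
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},
        # SageMaker provisions these instances for the job and releases them afterwards;
        # InstanceCount > 1 only helps if the training code supports distribution
        "ResourceConfig": {
            "InstanceType": instance_type,
            "InstanceCount": instance_count,
            "VolumeSizeInGB": 30,
        },
        # Hard cap on runtime, which also bounds the cost of a runaway job
        "StoppingCondition": {"MaxRuntimeInSeconds": max_runtime_seconds},
    }


# Hypothetical placeholder values for illustration only
request = build_training_job_request(
    job_name="demo-training-job",
    image_uri="<training-image-uri>",
    role_arn="arn:aws:iam::123456789012:role/DemoSageMakerRole",
    train_s3_uri="s3://demo-bucket/train/",
    output_s3_uri="s3://demo-bucket/output/",
)
print(json.dumps(request, indent=2))
# With credentials configured, the request could be submitted with:
# boto3.client("sagemaker").create_training_job(**request)
```

In this sketch, switching between a quick experiment and a larger run comes down to changing `instance_type` and `instance_count`. SageMaker handles provisioning in either case.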

Amazon SageMaker Studio and Development Environment

Amazon SageMaker Studio is an integrated development environment that centralizes machine learning workflows. It provides a web-based interface where users can manage notebooks, experiments, training jobs, and deployed models.

Within Amazon SageMaker Studio, users typically:

- Explore and preprocess data
- Train and evaluate models
- Track experiments and model versions
- Prepare models for deployment

This unified workspace reduces context switching and helps teams maintain visibility across the machine learning lifecycle.

Amazon SageMaker Deployment and Inference

Once models are trained, Amazon SageMaker offers multiple deployment options depending on application requirements. These options are designed to support real-time inference, batch processing, and asynchronous workloads.

Common Amazon SageMaker deployment patterns include:

- Real-time endpoints for low-latency predictions
- Batch inference for large datasets
- Serverless inference for intermittent workloads
- Multi-model endpoints to host multiple models on shared infrastructure

These deployment options allow organizations to balance cost, performance, and scalability.
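The cost/performance trade-off between real-time and serverless endpoints shows up directly in how an endpoint is configured. The following minimal sketch assumes the request shape of boto3's SageMaker `create_endpoint_config`. The helper function, config names, and model name are hypothetical. The allowed serverless memory sizes are written as documented at the time of writing and should be checked against current AWS documentation.

```python
# Memory sizes documented for serverless inference at the time of writing;
# verify against current AWS documentation before relying on them.
SERVERLESS_MEMORY_MB = {1024, 2048, 3072, 4096, 5120, 6144}


def build_endpoint_config(
    config_name: str,
    model_name: str,
    serverless: bool = False,
    instance_type: str = "ml.m5.large",
    instance_count: int = 1,
    memory_mb: int = 2048,
    max_concurrency: int = 5,
) -> dict:
    """Assemble keyword arguments for boto3's SageMaker `create_endpoint_config`.

    Hypothetical helper: it only builds a dictionary and does not contact AWS.
    """
    variant = {"VariantName": "AllTraffic", "ModelName": model_name}
    if serverless:
        if memory_mb not in SERVERLESS_MEMORY_MB:
            raise ValueError(f"unsupported serverless memory size: {memory_mb}")
        # Serverless: billed for compute used while processing requests,
        # not for instances sitting idle between requests
        variant["ServerlessConfig"] = {
            "MemorySizeInMB": memory_mb,
            "MaxConcurrency": max_concurrency,
        }
    else:
        # Real-time: dedicated instances stay running (and billed) until the endpoint is deleted
        variant["InstanceType"] = instance_type
        variant["InitialInstanceCount"] = instance_count
        variant["InitialVariantWeight"] = 1.0
    return {"EndpointConfigName": config_name, "ProductionVariants": [variant]}


# Hypothetical names for illustration only
realtime = build_endpoint_config("demo-realtime-config", "demo-model")
serverless = build_endpoint_config("demo-serverless-config", "demo-model", serverless=True)
print(realtime["ProductionVariants"][0])
print(serverless["ProductionVariants"][0])
# With credentials configured, either dictionary could be passed to
# boto3.client("sagemaker").create_endpoint_config(**config)
```

The same trained model can be placed behind either configuration. The choice mainly depends on whether traffic is steady enough to justify instances that are always running.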

Amazon SageMaker MLOps and Model Management

Amazon SageMaker includes features that support machine learning operations (MLOps), helping teams manage models over time. This includes tracking experiments, versioning models, and monitoring performance after deployment.

MLOps capabilities in Amazon SageMaker include:

- Model registry for version control and approval workflows
- Automated pipelines for training and deployment
- Monitoring tools to detect data drift and performance issues
- Integration with CI/CD systems

These features are particularly valuable for organizations running machine learning models in regulated or mission-critical environments.
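One common way the model registry and CI/CD fit together is a deployment step that only picks up approved model versions. The sketch below shows that gate under stated assumptions. The input is assumed to look like the `ModelPackageSummaryList` returned by boto3's SageMaker `list_model_packages`. Pagination is not handled. The function name and sample registry contents are hypothetical.

```python
from typing import Optional


def latest_approved_package(summaries: list) -> Optional[dict]:
    """Return the highest-versioned model package whose status is 'Approved'.

    `summaries` is assumed to look like the `ModelPackageSummaryList` from
    boto3's SageMaker `list_model_packages`; pagination is not handled here.
    """
    approved = [s for s in summaries if s.get("ModelApprovalStatus") == "Approved"]
    if not approved:
        return None  # nothing has been cleared for deployment yet
    return max(approved, key=lambda s: s["ModelPackageVersion"])


# Hypothetical registry contents for illustration
summaries = [
    {"ModelPackageVersion": 1, "ModelApprovalStatus": "Approved"},
    {"ModelPackageVersion": 2, "ModelApprovalStatus": "Rejected"},
    {"ModelPackageVersion": 3, "ModelApprovalStatus": "PendingManualApproval"},
]
chosen = latest_approved_package(summaries)
print(chosen["ModelPackageVersion"])  # → 1
```

Version 3 is newer but still awaits review, so the pipeline keeps deploying version 1. In this way, a manual approval step in the registry can act as a release gate.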

Amazon SageMaker Integration with AWS Services

One of the defining characteristics of Amazon SageMaker is its integration with other AWS services. It commonly works alongside:

- Amazon S3 for data storage
- AWS Identity and Access Management (IAM) for security
- Amazon CloudWatch for logging and monitoring
- AWS Lambda and Amazon API Gateway for application integration

This ecosystem approach allows Amazon SageMaker to function as a central machine learning component within larger cloud architectures.
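As an example of the Lambda and API Gateway pattern listed above, here is a minimal sketch of a handler that forwards a request body to a deployed SageMaker endpoint. It assumes API Gateway proxy integration, where the body arrives as a string. It also assumes a runtime client that behaves like `boto3.client("sagemaker-runtime")`, whose `invoke_endpoint` returns a streaming `Body`. The endpoint name, `make_handler`, and `FakeRuntimeClient` are hypothetical, and the fake client exists only so the sketch runs without AWS.

```python
import io
import json


def make_handler(runtime_client, endpoint_name: str):
    """Build a Lambda handler that forwards an API Gateway request body to a SageMaker endpoint.

    `runtime_client` is assumed to behave like boto3.client("sagemaker-runtime").
    """

    def handler(event, context):
        # With API Gateway proxy integration, the request body arrives as a string
        payload = event.get("body") or "{}"
        response = runtime_client.invoke_endpoint(
            EndpointName=endpoint_name,
            ContentType="application/json",
            Body=payload,
        )
        # The response body is a stream; read and decode the model's output
        prediction = response["Body"].read().decode("utf-8")
        return {
            "statusCode": 200,
            "headers": {"Content-Type": "application/json"},
            "body": prediction,
        }

    return handler


class FakeRuntimeClient:
    """Hypothetical stand-in for local testing; returns a fixed prediction."""

    def invoke_endpoint(self, EndpointName, ContentType, Body):
        return {"Body": io.BytesIO(json.dumps({"score": 0.87}).encode("utf-8"))}


handler = make_handler(FakeRuntimeClient(), "demo-endpoint")
result = handler({"body": json.dumps({"features": [1, 2, 3]})}, None)
print(result["statusCode"], result["body"])
```

In an actual Lambda deployment, the fake would be replaced with something like `make_handler(boto3.client("sagemaker-runtime"), os.environ["ENDPOINT_NAME"])`, where `ENDPOINT_NAME` is a hypothetical environment variable. The function's IAM role would also need permission to invoke the endpoint.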

Amazon SageMaker Use Cases Across Industries

Amazon SageMaker is used across a wide range of industries and applications, including:

- Recommendation systems in e-commerce
- Fraud detection in financial services
- Demand forecasting and optimization
- Natural language processing for customer support
- Computer vision for image and video analysis

Its flexibility makes it suitable for both experimental projects and large-scale enterprise deployments.

Amazon SageMaker Limitations and Considerations

While Amazon SageMaker simplifies many aspects of machine learning, it still requires planning and expertise. Teams must consider:

- Cost management for training and inference workloads
- Proper data preparation and feature engineering
- Monitoring and retraining strategies
- Security and compliance requirements
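Cost management often comes down to simple arithmetic. An always-on real-time endpoint is billed for every hour its instances run, even when idle, while a training job is billed only while it runs. The worked sketch below uses hypothetical placeholder hourly rates, not actual AWS prices, and approximates a month as 730 hours. The function names are illustrative.

```python
HOURS_PER_MONTH = 730  # common approximation: 24 * 365 / 12 is roughly 730


def realtime_endpoint_monthly_cost(hourly_rate: float, instance_count: int = 1) -> float:
    """Always-on endpoint: every instance is billed for every hour it runs, idle or not."""
    return round(hourly_rate * instance_count * HOURS_PER_MONTH, 2)


def training_monthly_cost(
    hourly_rate: float, instance_count: int, hours_per_run: float, runs_per_month: int
) -> float:
    """Training jobs: billed only for the hours each job actually runs."""
    return round(hourly_rate * instance_count * hours_per_run * runs_per_month, 2)


# Hypothetical placeholder rates, NOT actual AWS prices
endpoint = realtime_endpoint_monthly_cost(hourly_rate=0.25, instance_count=2)
training = training_monthly_cost(hourly_rate=1.00, instance_count=4, hours_per_run=2, runs_per_month=8)
print(endpoint, training)  # → 365.0 64.0
```

With these made-up numbers, the endpoint's hourly rate is a quarter of the training rate, yet it costs several times more per month because it never stops. This is why intermittent workloads are often better suited to serverless or batch inference.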

Amazon SageMaker is most effective when used by teams that have a clear understanding of their machine learning objectives and data pipelines.